Short answer: in 2026, Claude (Anthropic) is the best AI for writing and agentic coding; ChatGPT (OpenAI, now on GPT-5.5) is best for consumer features like image generation, voice mode, and Operator; Gemini (Google) is the cheapest at the API tier and best for long-context work (up to 2M tokens on Gemini 2.5 Pro) and multimodal reasoning. The three flagships are statistically tied on most aggregate benchmarks. The right pick depends entirely on what you're actually doing.

The full context: Claude, ChatGPT, and Gemini are the three LLMs most serious users actually pay for. Benchmark leadership rotates: whoever shipped last usually wins the press cycle. OpenAI shipped GPT-5.5 on April 23, 2026 (their first fully retrained base model since GPT-4.5, agentic-focused, $5/$30 per 1M tokens), which puts ChatGPT's flagship pricing roughly on par with Claude Opus 4.7 ($5/$25). Anthropic's next-gen training run is rumoured for late Q2. Google ships Gemini point updates roughly monthly. In practice each model has carved out territory where it's the clear pick and territory where it genuinely isn't. This is the version that's true on the day it was written, not the marketing version.

Long answer below. We'll cover what each is objectively best at on current benchmarks, where subjective preference shakes out among heavy users, the pricing math, and five concrete scenarios with a specific recommendation per scenario.

At-a-glance comparison

| Dimension | Claude | ChatGPT | Gemini |

|---|---|---|---|

| Flagship model | Claude Opus 4.7 | GPT-5.5 (Apr 2026) | Gemini 3.1 Pro |

| Flagship input price / 1M | $5.00 | $5.00 | $2.00 |

| Flagship output price / 1M | $25.00 | $30.00 | $12.00 |

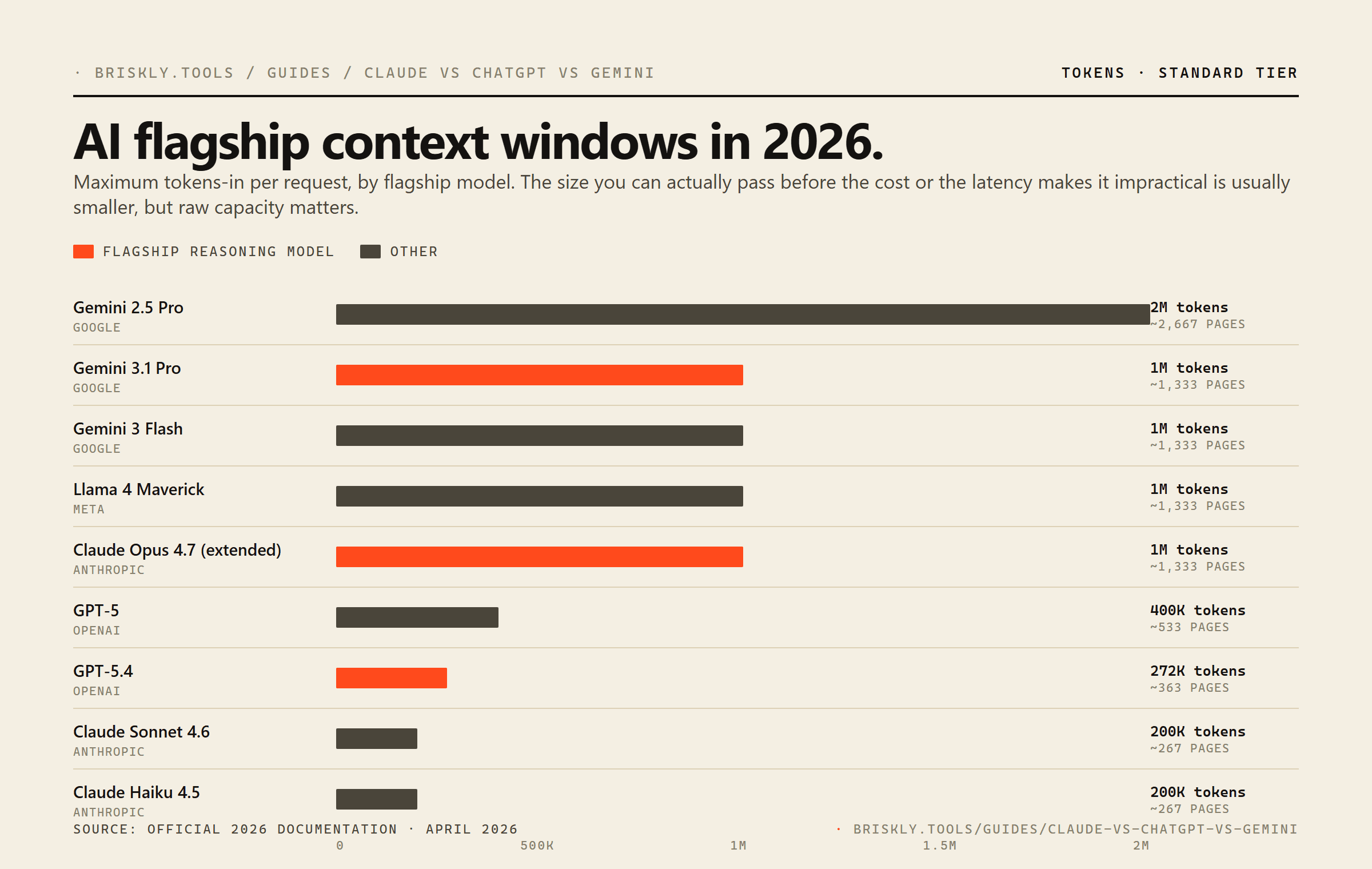

| Max context (flagship) | 1M tokens (extended) | 272K tokens | 1M tokens |

| Consumer subscription | Claude Pro, $20/mo | ChatGPT Plus, $20/mo | Gemini Advanced, $20/mo |

| Native web search | No (MCP / Projects only) | Yes | Yes (Google integrated) |

| Image generation | No | Yes (DALL-E integrated) | Yes (Imagen integrated) |

| Voice mode | No native voice | Advanced Voice | Gemini Live |

| Coding tool integration | Claude Code, Cursor, Zed | Codex CLI, Copilot | Gemini Code Assist |

| Extensibility | MCP (native), Projects | Plugins, MCP, Operator | Extensions, MCP |

| Writing quality (3rd-party evals) | Strongest overall | Strong, creative | Weaker prose, strong research |

| Coding quality (SWE-bench) | Strongest on agentic coding | Strong on algorithmic | Strong, improving |

Writing quality

Third-party evaluations of pure prose quality (blind human preference tests, not benchmark scores) have put Claude at the top since mid-2025. The gap is small on first-draft quality; it's larger on follow-through (the quality of a model's third paragraph, not just its first). Claude also has less AI-signature phrasing out of the box: fewer "delve," "tapestry," "in today's world" constructions, which means less editing to ship.

ChatGPT (GPT-5.5, launched Apr 23 2026) is close, with two genuine advantages: it's better at creative brainstorming and idea generation, and its style-adoption is more flexible when you feed it examples. For ghost-writing in a client's voice, ChatGPT is often the better tool.

Gemini 3.1 Pro is the weakest of the three on prose but the strongest on research-grounded writing. If the output needs to cite real sources and reflect current information, Gemini beats both thanks to native Google Search integration.

Pick: Claude for finished prose, ChatGPT for brainstorming, Gemini for research reports.

Best AI for marketing copy

Claude for landing pages, ad copy, email sequences, and anything that has to read like a human wrote it. The lower rate of AI-signature phrasing means less editing per shipped line. Use ChatGPT for variations and brainstorming, then port the strongest direction into Claude for finishing.

Best AI for technical writing

Claude wins for sustained technical writing (docs, runbooks, API references) because it holds context and tone across a long session. Gemini wins when the writing needs to cite live sources and reflect current state: native Google Search integration largely removes the source-hallucination problem the other two still have.

Best AI for ghostwriting in someone else's voice

ChatGPT. Style adoption from examples is materially better: GPT-5.5 in particular will absorb a tone from 2-3 sample paragraphs faster than Claude. Claude tends to drift back toward its own register over a long session; ChatGPT holds the borrowed voice longer.

Best AI for fiction and creative writing

Claude for sustained narrative (novels, novellas, multi-chapter arcs) where character voice and pacing matter. ChatGPT for short-form creative work, brainstorming plot beats, and anything that benefits from the higher stylistic risk-tolerance GPT-5.5 ships with by default.

Code generation

Claude leads here and it's not especially close for non-trivial work. On SWE-bench Verified (real GitHub issues), Aider Polyglot (multi-language edits), and Terminal-Bench (shell-based agentic coding), Claude Opus 4.7 has held top position through 2025-2026. The effect compounds in agentic settings, where the model needs to read, edit, test, and retry, because Claude's tool-use reliability (picking the right tool, recovering from errors) is better than its competitors'. Cursor, Zed, Claude Code, and most production coding agents default to Claude for this reason.

GPT-5.5 (the new agentic-focused flagship) closed the gap meaningfully on Terminal-Bench 2.0 (82.7%) and OSWorld-Verified (78.7%), both agentic benchmarks. For algorithmic snippets and short one-shot generations, GPT-5.4 (now mid-tier at $2.50/$15) is still competitive and cheaper. For agentic coding over a large codebase, Claude still leads on session success rate, meaning cheaper-per-token alternatives often cost more per-completed-task because of retries.
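The cost-per-completed-task argument can be made concrete with a little arithmetic. The sketch below assumes each attempt succeeds independently with a fixed probability, so the expected number of attempts is 1 / success rate. The per-1M-token prices are the ones quoted in this article; the token counts and both success rates are hypothetical placeholders, not measured figures, so substitute numbers from your own sessions.

```python
def cost_per_attempt(input_tokens, output_tokens, input_price, output_price):
    """Dollar cost of one attempt. Prices are dollars per 1M tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def expected_cost_per_completed_task(attempt_cost, success_rate):
    """Expected spend until the first success, assuming independent retries."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return attempt_cost / success_rate


# Hypothetical agentic task: 200K input tokens and 20K output tokens per attempt.
opus_attempt = cost_per_attempt(200_000, 20_000, 5.00, 25.00)   # Opus 4.7 prices
gpt54_attempt = cost_per_attempt(200_000, 20_000, 2.50, 15.00)  # GPT-5.4 prices

# Hypothetical success rates, chosen only to illustrate the effect.
opus_expected = expected_cost_per_completed_task(opus_attempt, 0.80)
gpt54_expected = expected_cost_per_completed_task(gpt54_attempt, 0.40)

print(f"Opus 4.7: ${opus_attempt:.2f} per attempt, ${opus_expected:.3f} per completed task")
print(f"GPT-5.4:  ${gpt54_attempt:.2f} per attempt, ${gpt54_expected:.3f} per completed task")
```

With these made-up inputs the cheaper model costs about half as much per attempt but slightly more per completed task. The conclusion flips as soon as the success rates are closer together, which is why measuring your own completion rate matters more than the list price.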

Gemini 3.1 Pro has improved dramatically and is the pragmatic choice inside the Google ecosystem (Android, Firebase, Google Cloud), the stack its models are tuned on. Outside that stack it underperforms Claude on agentic coding.

Pick: Claude for anything agentic or multi-file; ChatGPT for quick algorithmic snippets; Gemini if you're on Google Cloud.

Best AI for Python

Claude Opus 4.7 for production Python (data pipelines, web apps, ML scripts) where code has to actually run against a real codebase. GPT-5.5 is comparable on isolated algorithmic problems (LeetCode-style), though at $5/$30 it is not cheaper than Opus; GPT-5.4 ($2.50/$15) is the cheaper OpenAI option. For Jupyter / notebook work specifically, the two flagships are roughly tied.

Best AI for JavaScript and TypeScript

Claude in agentic editors (Cursor, Zed, Claude Code), particularly for full-stack TypeScript work that touches both backend and frontend. The type-system fluency is a noticeable lead over GPT-5.5 on complex generic inference and React 19 patterns.

Best AI for code review

Claude. The catch-rate on real bugs (logic errors, security issues, edge cases) is higher than GPT-5.5 or Gemini 3.1 Pro in head-to-head reviews on the same diff. ChatGPT tends to over-flag style nits; Gemini tends to under-flag subtle issues. For PR review automation in CI, Claude is the default.

Best AI for agentic coding (Cursor, Claude Code, Codex)

Claude Opus 4.7 by a measurable margin. The gap shows up in session success rate: the percentage of multi-step coding tasks that complete without human intervention. The higher per-token cost ends up cheaper per completed task because of fewer retries, which is why most production coding agents default to Claude.

Reasoning and research

On aggregate reasoning benchmarks (GPQA Diamond, MMLU-Pro, AIME), all three flagships are statistically indistinguishable; they're at the frontier together. The differences come down to what kind of reasoning you need:

- Math / formal logic: GPT-5.5 has a slight edge, especially with its reasoning mode (84.9% on GDPval). The o-series lineage (o1 → o4-mini → GPT-5.5's reasoning fork) has been consistently strong on structured problems.

- Multi-step tool-use reasoning: Claude Opus wins. When reasoning is gated by picking the right tool, reading its output, and continuing the chain, Claude's reliability stacks up over long sessions.

- Multimodal reasoning (image + text): Gemini 3.1 Pro wins. Native multimodal training makes it stronger at combined vision + reasoning tasks.

- Live-web research: ChatGPT Deep Research or Gemini with Google Search. Claude has no native web; you have to pipe external data in via MCP (see our MCP primer) or Projects.

Pricing (the part that actually matters)

For consumer use, all three sit at $20/month for their flagship-access plan. Differences at that tier come down to usage limits and bundled features, not capability. If you're deciding between subscriptions, the right move is usually to trial each for a week and pick the one you actually reach for. Most users develop a preference quickly.

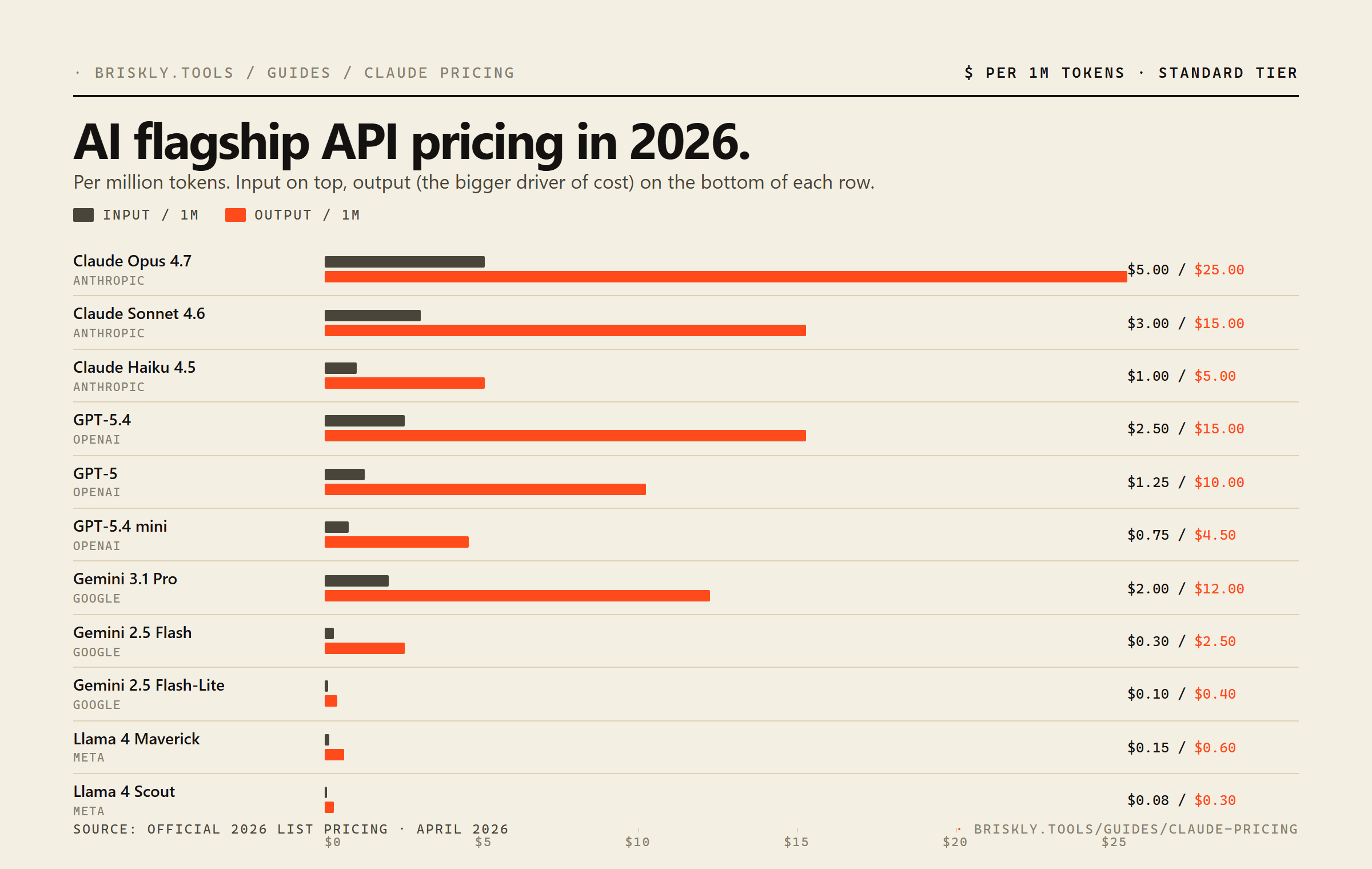

For API / developer use, the gap is meaningful. At the flagship tier:

- Claude Opus 4.7: $5 input / $25 output per 1M tokens

- GPT-5.5 (new flagship, Apr 2026): $5 input / $30 output per 1M tokens

- GPT-5.4 (now mid-tier): $2.50 input / $15 output per 1M tokens

- Gemini 3.1 Pro: $2 input / $12 output per 1M tokens

Gemini is cheapest per token at the flagship tier. Claude and GPT-5.5 are matched on input ($5), and Claude wins on output ($25 vs $30). The previous flagship GPT-5.4 (now demoted) is still the cheapest serious OpenAI option at the mid-tier. For high-volume workloads where quality is fungible, Gemini's cost advantage compounds fast. For workloads where the top-tier quality edge actually matters (agentic coding, sustained writing), the higher rate usually pays for itself in fewer retries.
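To see how these rates play out on a bill, here is a minimal sketch that prices one workload across the four models listed above. The prices are copied from this article; the monthly volume (50M input tokens, 10M output tokens) is a hypothetical example, and the calculation ignores caching discounts, batch pricing, and any long-context surcharges a provider may apply.

```python
# Dollars per 1M tokens (input, output), as quoted in this article.
PRICES = {
    "Claude Opus 4.7": (5.00, 25.00),
    "GPT-5.5": (5.00, 30.00),
    "GPT-5.4": (2.50, 15.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
}


def workload_cost(model, input_tokens, output_tokens):
    """List-price cost in dollars for the given token volumes."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical monthly volume: 50M input tokens, 10M output tokens.
monthly = {
    model: workload_cost(model, 50_000_000, 10_000_000) for model in PRICES
}

for model, dollars in sorted(monthly.items(), key=lambda item: item[1]):
    print(f"{model:<16} ${dollars:,.2f}")
```

For this input-heavy mix the list comes out at $220 for Gemini 3.1 Pro, $275 for GPT-5.4, $500 for Claude Opus 4.7, and $550 for GPT-5.5. Shifting the mix toward output widens the gap between Opus and GPT-5.5, since they differ only on the output rate.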

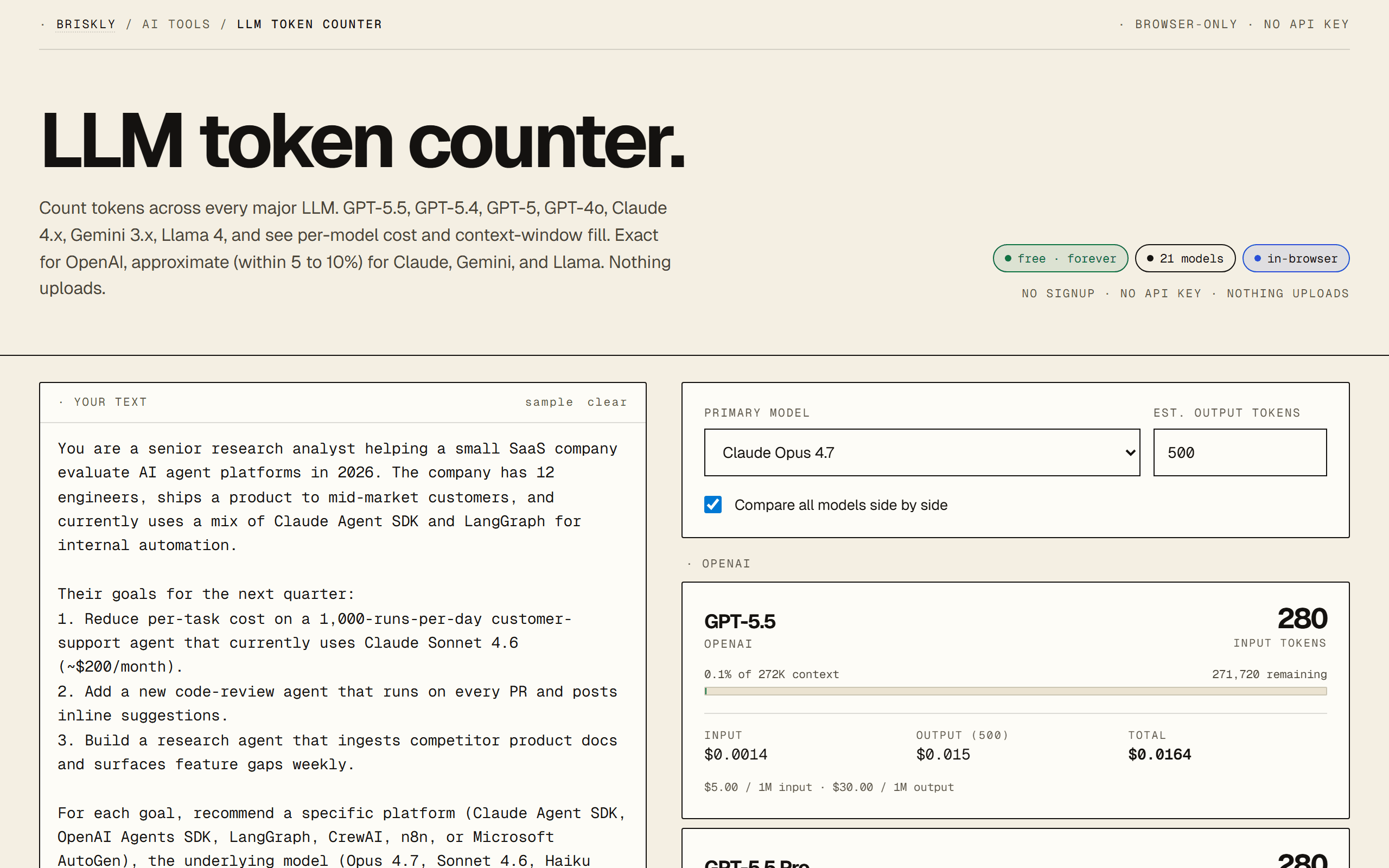

We have a full Claude pricing breakdown with per-workload math, and the LLM token counter runs exact per-model costs on your own prompt across all 21 major models at once.

Five scenarios, a pick for each

Scenario 1. Best AI for longform writing

Pick Claude. Sonnet 4.6 as your daily driver; Opus 4.7 for the deep-research pieces. The prose comes out needing less editing than ChatGPT or Gemini, and Claude's tone control is more consistent across a long session.

Scenario 2. Best AI for building a consumer app

ChatGPT (GPT-5.5, GPT-5.4 mid-tier, or GPT-5 mini via the API). GPT-5.5 for premium experiences, GPT-5.4 if cost matters and you need flagship-tier quality, GPT-5 mini for the cheapest credible OpenAI tier. The ChatGPT brand pulls weight with non-technical end users. If cost is the primary driver, fall back to Gemini 2.5 Flash.

Scenario 3. Best AI for agentic coding and automation

Claude Opus 4.7. Not close. Session success rate on multi-step coding tasks is measurably ahead; the higher per-token price is cheaper per completed task once you factor in retries.

Scenario 4. Best AI for long-context (500K+ tokens)

Gemini 2.5 Pro (2M context) or Claude Opus 4.7 (1M extended context). Gemini is cheaper per input token; Claude is better at synthesizing across the full context. Test both on a sample and pick.

Scenario 5. Best AI subscription for a solo operator

Claude Pro. Best writing, best code, adequate everything else. If you're paying for ChatGPT Plus purely for voice mode or image generation, those are genuine wins ChatGPT has that Claude doesn't, so Plus is defensible, but most people's daily-driver work overlaps more with Claude's strengths.

The honest qualifier

All three of these companies ship updates weekly. A blog post that says "X is the best" has a shelf life measured in months. What's durable is the pattern of strengths: Anthropic optimizes for sustained quality and reliability under tool use; OpenAI optimizes for consumer reach and broad feature parity; Google optimizes for scale, integration, and multimodal. Those positionings have been stable for two years and probably will be for another two.

What that means for you: if none of the three is currently perfect for your use case, wait a quarter and re-evaluate. One of them will ship something that closes the gap. There's no moat here big enough to deserve brand loyalty.

FAQ

Is Claude better than ChatGPT?

For sustained writing, code generation on complex projects, and agentic tool use, Claude Opus 4.7 has a measurable edge in third-party evaluations. For image understanding, voice mode, plugins/MCP breadth, and the ChatGPT consumer product (Advanced Voice, Operator, Canvas), ChatGPT (GPT-5.5, launched Apr 23 2026) is ahead. GPT-5.5 narrowed the gap on agentic benchmarks specifically (Terminal-Bench 2.0 82.7%, OSWorld-Verified 78.7%), making it more competitive for tool-use workloads. On pure one-shot answers to typical questions, they're indistinguishable to most users. "Better" depends entirely on what you're doing.

Is ChatGPT or Claude cheaper?

The API is priced per token; the consumer subscriptions are flat-fee. On the new flagship tier (Apr 2026), GPT-5.5 ($5/$30) and Claude Opus 4.7 ($5/$25) match on input, but Claude is $5 cheaper per 1M output tokens. The previous flagship GPT-5.4 (now mid-tier at $2.50/$15) is 50% cheaper than Opus on input and 40% cheaper on output, so on token price alone it undercuts Opus at any input:output ratio; whether it is cheaper per completed task depends on how many retries the work needs. On the $20/month consumer plans (ChatGPT Plus vs. Claude Pro), both include comparable message limits and access to their flagship models, so it comes down to which model you prefer.

Which AI is best for coding?

Claude Opus 4.7 leads most independent coding benchmarks (SWE-bench Verified, Aider Polyglot, Terminal-Bench 2.0) and is the default choice in Claude Code, Cursor, Zed, and most agentic coding tools. GPT-5.5 closed the gap meaningfully (82.7% on Terminal-Bench 2.0, in a similar tier to Opus) and is a credible alternative for new agentic-coding stacks. GPT-5.4 and GPT-5 are close on pure algorithmic problems and when the coding task doesn't need to reason about long codebases. Gemini 3.1 Pro is competitive and cheaper if you're working within Google's stack.

Which AI is best for writing?

Claude has consistently ranked highest on third-party writing evaluations through 2025 and 2026, with Sonnet 4.6 being the most common choice for longform content because it writes with less AI-signature phrasing than competitors out of the box. ChatGPT is close and wins on creative brainstorming and idea generation. Gemini is the weakest on pure prose quality but is strong on research-grounded writing because of its integration with Google Search.

Which AI is best for research?

ChatGPT with Deep Research and Gemini 3.1 Pro (which integrates Google Search natively) are both stronger than Claude for live-information queries. Claude has no native web search; you have to pipe it through an MCP server or use the Projects feature. For offline research over documents you've provided, Claude's 1M context window and reasoning quality make it the most thorough.

Which AI is the smartest?

On aggregate reasoning benchmarks (GPQA Diamond, MMLU-Pro, AIME) as of April 2026, Claude Opus 4.7, GPT-5.5, and Gemini 3.1 Pro are statistically indistinguishable at the top. GPT-5.5 edges ahead on math and formal logic, especially with its reasoning mode (GDPval 84.9%); Claude Opus wins on multi-step reasoning with structured tool calls; Gemini 3.1 Pro wins on multimodal reasoning. There is no single "smartest": they're at the frontier together, and each has local strengths.

Should I use Claude or ChatGPT for work?

If your work is document-heavy, writing-heavy, or code-heavy, Claude is usually the better daily driver. If your work needs live web search, voice interaction, image generation, or integration with ChatGPT plugins, ChatGPT wins. For a team-wide pick, Claude's longer context (1M for Opus) makes it better for large-document workflows; ChatGPT's Operator and Canvas features make it better for mixed-media workflows. Many experienced users pay for both.

Which AI has the longest context?

Gemini 2.5 Pro with 2M tokens, then Gemini 3.1 Pro / Gemini 3 Flash / Llama 4 Maverick / Claude Opus 4.7 (extended) / GPT-5 at 1M, then GPT-5.4 at 272K and Claude Sonnet / Haiku at 200K. For ingesting entire codebases, large contracts, or long transcripts, Gemini still wins on raw capacity.
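A quick way to apply these limits is to filter the list by the size of the prompt you plan to send. The sketch below uses the context figures quoted in the answer above, treating "2M", "1M", "272K", and "200K" as round decimal numbers, which is an assumption; exact limits may differ by provider and tier. The helper name `models_that_fit` and the output reserve are illustrative, not part of any vendor API.

```python
# Context windows in tokens, as quoted above (round numbers are an assumption).
CONTEXT_WINDOWS = {
    "Gemini 2.5 Pro": 2_000_000,
    "Gemini 3.1 Pro": 1_000_000,
    "Gemini 3 Flash": 1_000_000,
    "Llama 4 Maverick": 1_000_000,
    "Claude Opus 4.7 (extended)": 1_000_000,
    "GPT-5": 1_000_000,
    "GPT-5.4": 272_000,
    "Claude Sonnet": 200_000,
    "Claude Haiku": 200_000,
}


def models_that_fit(prompt_tokens, reserved_output_tokens=0):
    """Return models whose window holds the prompt plus reserved output room."""
    needed = prompt_tokens + reserved_output_tokens
    return [name for name, window in CONTEXT_WINDOWS.items() if window >= needed]


# Hypothetical inputs: a 1.5M-token codebase dump, and a 250K-token contract
# set with 30K tokens held back for the model's answer.
print(models_that_fit(1_500_000))
print(models_that_fit(250_000, reserved_output_tokens=30_000))
```

Reserving room for the response matters near a boundary: a 250K-token prompt fits in a 272K window on its own, but not once 30K tokens are set aside for output.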

Can Claude, ChatGPT, and Gemini all access the internet?

ChatGPT and Gemini can, natively. Claude cannot directly, but via MCP servers, connected apps, or the Projects feature, Claude can reach external data sources. The practical difference: ChatGPT and Gemini are point-and-click for live queries; Claude requires one-time integration but then has more control over what it reaches.

Which AI is free?

All three have free tiers. ChatGPT Free: GPT-5 mini with daily limits. Claude Free: Claude Haiku 4.5 with daily limits. Gemini Free: Gemini 3 Flash with very generous limits, available through Google One or directly. For serious use, paid tiers are effectively required. It's worth testing all three on the free tier first; most users develop a preference after a few days.

Related

- Claude pricing in 2026, compared: the full per-workload cost math.

- LLM token counter: paste a prompt, see cost across all 21 models.

- MCP server primer: how to give Claude native web access.

- Claude Design, first look: Anthropic's brand-system generator, reviewed.